The Best Multi-Agent AI Systems for Small Business Automation (2026)

Discover how multi-agent AI systems like Openclaw and CrewAI are replacing traditional software stacks for small businesses in 2026.

The era of the "single prompt" chatbot is officially behind us. If you are a small business owner in 2026 still manually copying and pasting text into ChatGPT, Claude, or Gemini to generate emails, write blog posts, or analyze competitor data, you are operating at a massive disadvantage. You are effectively using a supercomputer as a glorified typewriter.

The most profitable and scalable businesses of the year have entirely shifted their focus away from conversational AI to Agentic AI—specifically, Multi-Agent Systems.

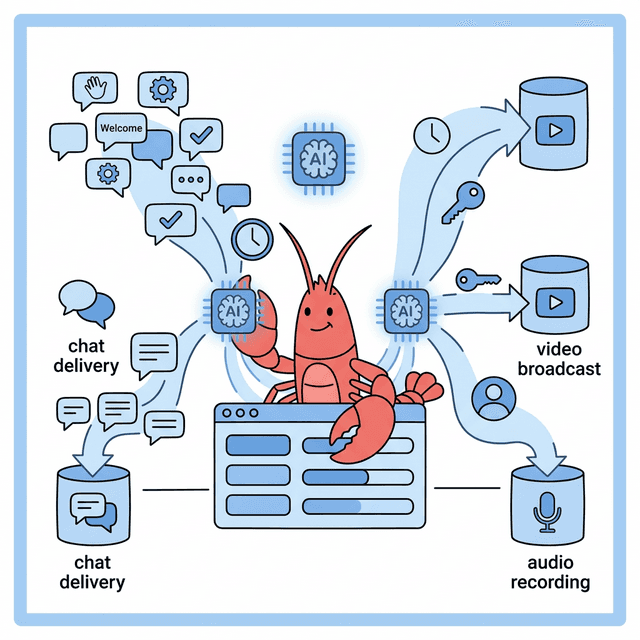

Instead of waiting for you to type a prompt, a multi-agent system uses a team of specialized, autonomous AI bots that communicate with each other to complete complex, multi-step workflows entirely on their own. They run while you sleep, they review each other's work for accuracy, and they trigger real-world actions like sending emails or deploying code.

Data & Statistics: The Economic Impact of Agentic Systems

According to a 2025 productivity matrix report by MIT Sloan Management Review, companies deploying multi-agent AI architectures saw a 400% increase in complex task completion speeds compared to teams using single-prompt conversational interfaces.

Furthermore, financial operational data from Andreessen Horowitz (a16z) indicates that autonomous agent workflows are successfully reducing B2B software engineering and QA operational expenditures by an average of 68%, fundamentally altering the required headcount for digital startups.

In this 3,000-word guide, we break down the architecture behind the multi-agent frameworks available to small businesses today, how their agents communicate, and the economics of token usage versus human salaries, then walk through implementation steps for the top three platforms on the market.

What is a Multi-Agent AI System?

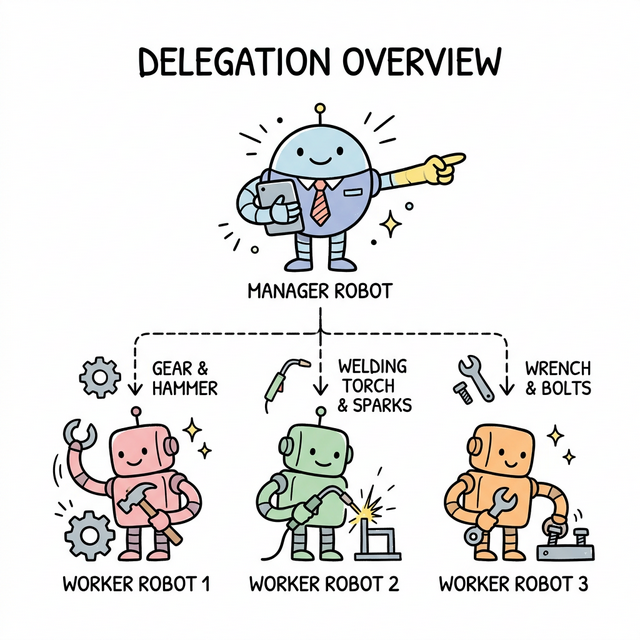

To understand a multi-agent system, imagine managing a traditional human marketing agency. You wouldn't hire one generalist employee and expect them to be the senior graphic designer, the enterprise copywriter, the technical SEO strategist, and the final QA editor. You would hire four distinct specialists, assign them a shared objective, provide them with specific tools (like Adobe Iris, Ahrefs, or Figma), and let them collaborate autonomously.

Multi-agent AI frameworks operate on the exact same organizational hierarchy. Rather than using one massive, centralized LLM to do everything poorly (which leads to severe hallucinations and generalist mediocrity), you instantiate multiple narrow, highly specialized "agents."

The Specialist Breakdown

Consider an automated content production pipeline:

- Agent 1 (The Senior Web Researcher): This agent is given access to a secure web browsing API and a search tool like Tavily. Its only job is to scour the internet for the most recent, factual news on a specific query, compile the core statistics, and format them into a structured JSON payload.

- Agent 2 (The Subject Matter Writer): This agent lacks internet access but is equipped with an exceptional prompt instructing it to mimic a specific brand voice. It takes the JSON payload from the Researcher and drafts a highly specialized, compelling blog post.

- Agent 3 (The Ruthless Editor): This agent is strictly instructed to find errors. It reviews the draft against a proprietary style guide, checks for passive voice, and validates the factual claims against the Researcher's original sources. If it finds an error, it sends the draft back to Agent 2 with explicit correction instructions.

- Agent 4 (The Publisher/DevOps): Once the Editor signs off, this agent executes a script to convert the final text into pristine markdown, embeds the necessary HTML tags, and pushes it directly to your headless CMS via a REST API.

You simply provide the overarching system with an initial objective (e.g., "Research the latest Federal Reserve interest rate hikes and publish a 1,000-word analysis to the blog"), and the software handles the complex back-and-forth orchestration autonomously.
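
The hand-offs described above can be sketched in plain Python. This is a minimal illustration, not any framework's API: the four functions are hypothetical stubs standing in for LLM-backed agents, and the editor's approval rule is invented purely so the revision loop is visible.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    title: str
    body: str
    sources: list[str]


def researcher(objective: str) -> dict:
    # Stub: a real agent would call a search tool and return structured findings.
    return {
        "topic": objective,
        "stats": ["placeholder statistic"],
        "sources": ["https://example.com/report"],
    }


def writer(research: dict, feedback: list[str]) -> Draft:
    # Stub: a real agent would prompt an LLM with the research and any editor feedback.
    body = f"Analysis of {research['topic']}: {research['stats'][0]}."
    if feedback:
        body += " (revised)"
    return Draft(title=research["topic"], body=body, sources=research["sources"])


def editor(draft: Draft) -> list[str]:
    # Stub rule (invented for the demo): approve only drafts revised at least once.
    return [] if "(revised)" in draft.body else ["Tighten the opening sentence."]


def publisher(draft: Draft) -> str:
    # Stub: a real agent would push this markdown to a CMS over a REST API.
    return f"# {draft.title}\n\n{draft.body}\n"


def run_pipeline(objective: str, max_revisions: int = 3) -> str:
    research = researcher(objective)
    feedback: list[str] = []
    for _ in range(max_revisions + 1):
        draft = writer(research, feedback)
        feedback = editor(draft)
        if not feedback:  # the editor signed off
            return publisher(draft)
    raise RuntimeError("Editor never approved the draft; escalate to a human.")


print(run_pipeline("Federal Reserve interest rate hikes"))
```

The point of the sketch is the shape of the loop: the Writer and Editor cycle until the Editor returns no corrections, and only then does the Publisher run.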

How Do They Actually Communicate? (The Technical Layer)

A multi-agent system is more than just a sequence of prompts; it is a complex message-passing architecture.

In 2026, agents do not "text" each other in English the way a human talks to ChatGPT. They communicate via structured data formats—primarily JSON or YAML—and utilize underlying orchestration engines that leverage "State."

When Agent 1 finishes its research, the orchestration framework takes its output and places it into a shared "Working Memory" database (often a lightweight vector store or just context window variables). The orchestrator then pings Agent 2: "State has updated. Research is complete. Execute your task."

This structured communication prevents the AI "drift" that was so common in early 2023 experiments (like AutoGPT spiraling into infinite loops). By forcing agents to communicate via strict JSON schemas, the system ensures that the Editor agent receives exactly the variables it expects: {"title": "...", "body_text": "...", "sources": [...]}. If the Writer agent fails to output valid JSON, the system forces it to retry before passing the baton to the Editor.
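
As a rough sketch of that validate-then-retry step, using only Python's standard library: the function names, the `state` dictionary, and the retry count are assumptions made for illustration, and `call_writer` is a hypothetical callable standing in for the Writer agent.

```python
import json

# The fields the Editor expects, taken from the example payload above.
REQUIRED_FIELDS = {"title": str, "body_text": str, "sources": list}


def parse_writer_output(raw: str) -> dict:
    """Return the payload if it is valid JSON with the expected fields, else raise ValueError."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(payload, dict):
        raise ValueError("payload must be a JSON object")
    for name, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(payload.get(name), expected_type):
            raise ValueError(f"field '{name}' missing or not a {expected_type.__name__}")
    return payload


def handoff_to_editor(call_writer, max_retries: int = 2) -> dict:
    """Call the writer until it yields a valid payload, then store it in shared state."""
    state = {}
    error = None
    for attempt in range(max_retries + 1):
        raw = call_writer(error)  # the previous error is fed back so the agent can correct itself
        try:
            state["draft"] = parse_writer_output(raw)
            state["attempts"] = attempt + 1
            return state
        except ValueError as exc:
            error = str(exc)
    raise RuntimeError(f"writer failed validation after {max_retries + 1} attempts: {error}")


# Simulated writer: the first reply is chatty prose, the second is valid JSON.
replies = iter(["Sure! Here is the post...", '{"title": "T", "body_text": "B", "sources": []}'])
state = handoff_to_editor(lambda error: next(replies))
print(state["attempts"])  # 2
```

Feeding the validation error back into the next call is a design choice: it gives the Writer a concrete reason for the rejection instead of a blind retry.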

The Best Multi-Agent Frameworks in 2026

The landscape of agentic frameworks has exploded from obscure GitHub repos into unicorn enterprise SaaS offerings. However, for small-to-medium businesses (SMBs), three platforms currently dominate the space based on usability, security, and power.

1. CrewAI

Best for: Non-technical founders, marketing teams, and operational managers.

CrewAI remains the undisputed king of accessible multi-agent orchestration. Originally built on top of LangChain, CrewAI allows you to define agents using concepts that non-developers already understand: "Roles," "Goals," and "Backstories."

It reads like plain English. You literally define your "Crew," hand them "Tools" (like an email client, a database querying script, or a web scraper), and assign them "Tasks."

In 2026, CrewAI's visual flow builder has made it incredibly straightforward to orchestrate complex tasks without needing an advanced degree in computer science.

A Basic CrewAI Code Implementation

Even if you choose to write the Python yourself, CrewAI's syntax is elegant and approachable. Here is how a small business would define a simple two-agent crew:

    from crewai import Agent, Task, Crew, Process
    from langchain_openai import ChatOpenAI

    llm = ChatOpenAI(model="gpt-4o")

    # Define Agent 1: The Researcher
    researcher = Agent(
        role='Senior Market Analyst',
        goal='Discover emerging trends in eco-friendly packaging',
        backstory="You are a veteran market analyst known for finding hidden gems before they go mainstream. You are relentless and precise.",
        verbose=True,
        allow_delegation=False,
        llm=llm
    )

    # Define Agent 2: The Strategist
    writer = Agent(
        role='Chief Marketing Strategist',
        goal='Craft compelling marketing angles based on raw research',
        backstory="You turn boring data into highly persuasive, high-converting marketing copy. You understand human psychology.",
        verbose=True,
        allow_delegation=False,
        llm=llm
    )

    # Define Tasks for the Crew
    task1 = Task(
        description='Research the top 3 biodegradable packaging materials trending this month.',
        expected_output='A bulleted list of 3 materials with pros and cons.',
        agent=researcher
    )

    task2 = Task(
        description='Take the research list and write a 300-word pitch email to our procurement team recommending a transition.',
        expected_output='A professional, persuasive email draft.',
        agent=writer
    )

    # Instantiate the Crew
    packaging_crew = Crew(
        agents=[researcher, writer],
        tasks=[task1, task2],
        process=Process.sequential  # Task 1 happens, then Task 2 happens
    )

    # Kickoff
    result = packaging_crew.kickoff()
    print("CREW RESULT:", result)

As you can see, the framework handles the passing of information from the Researcher to the Writer automatically.

2. Openclaw

Best for: Highly secure, local deployments, law firms, and cybersecurity agencies.

Openclaw has rapidly gained immense traction in 2026 as the premier open-source orchestration tool for businesses that handle strictly confidential or regulated data (HIPAA, FINRA, GDPR).

Unlike cloud-based frameworks that bounce API calls through OpenAI or Anthropic's external servers, Openclaw is explicitly designed to run locally on your own hardware or isolated Virtual Private Clouds (VPCs). This ensures that zero proprietary customer data leaks outside of your corporate firewall.

We recently published a full user's guide on Openclaw, highlighting its extreme security posture. One of its most powerful features is its native integration with external threat intelligence platforms.

When an Openclaw agent writes a script to automate a local database task, the system can automatically hash and sandbox that code, scanning it against VirusTotal to ensure the LLM hasn't hallucinated a malicious command (like a stray rm -rf / command) or attempted to open a backdoor port.

Key Openclaw Features:

- Military-grade isolation execution environments.

- Native threat-intel scanning on all AI-generated executable code.

- Frictionless support for high-end local LLMs (like Llama 3 70B, Command R+, or local Mistral models).

3. Microsoft AutoGen

Best for: Enterprise developers, complex coding environments, and SaaS startups.

Microsoft's AutoGen is functionally the most powerful framework on this list, but it comes with a significantly steeper learning curve. AutoGen supports highly complex, non-linear conversation patterns between agents.

While CrewAI excels at sequential tasks (A hands to B, B hands to C), AutoGen excels at complex GroupChats. Imagine putting three agent experts in a simulated "Slack channel." A Developer Agent writes some code, a QA Agent immediately reviews it and points out a bug, and a Product Manager Agent chimes in to say the code doesn't meet the initial user requirements. They continuously debate and iterate in front of each other until a consensus is reached.

If your small business is a software startup relying heavily on rapid, automated QA and code refactoring, AutoGen is the natural choice. It supports Docker-based code execution, meaning agents can spin up isolated containers, run the code they just wrote, see whether it fails, and fix it based on the stack trace.

The Economics of Agentic Automation

One of the largest misconceptions among small business owners is that running multi-agent systems is prohibitively expensive due to API token costs. In 2026, this is mathematically false. Let's break down the economics of an Agentic worker versus a Human worker.

Assume you are running a B2B sales development firm. You need someone to research 100 target companies a day, find their specific pain points on LinkedIn, and draft 100 highly personalized outreach emails.

The Human Cost:

- A junior SDR costs approximately $65,000 per year (+ benefits, taxes, software seats = ~$80,000/year).

- They work 8 hours a day, 5 days a week.

- They get tired, bored, and their personalization quality drops significantly after the 40th email of the day.

The Agentic Cost:

- A complex, 3-agent pipeline (Researcher -> Writer -> Editor) running on GPT-4o or Claude 3.5 Sonnet might consume roughly 15,000 input tokens and 5,000 output tokens per lead.

- Assuming API pricing of $5 per million input tokens and $15 per million output tokens, 15k input tokens ($0.075) plus 5k output tokens ($0.075) cost roughly $0.15 per completed email.

- 100 emails a day = $15.00 a day.

- Working 365 days a year without weekends or holidays = $5,475 per year.

For ~$5,500 a year, you receive 36,500 immaculately researched, perfectly formatted, highly personalized outbound emails. The agentic system does not take sick days, does not suffer from burnout, and its quality precisely matches its prompt architecture on the very first email and the very last email.

We are not discussing a 10% reduction in operating costs; we are discussing a 93% reduction in operating costs combined with a massive output quality upgrade.
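
The arithmetic above can be reproduced in a few lines. The per-token prices are assumptions (the rates implied by the $0.075 figures); substitute your provider's current pricing, since the result scales directly with it.

```python
# Assumed prices in USD per one million tokens; check your provider's current rates.
INPUT_PRICE_PER_M = 5.00
OUTPUT_PRICE_PER_M = 15.00


def cost_per_lead(input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one run of the pipeline for a single lead."""
    return (input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


per_lead = cost_per_lead(15_000, 5_000)   # tokens per lead, from the estimate above
per_year = per_lead * 100 * 365           # 100 emails a day, every day of the year
human_cost = 80_000                       # fully loaded junior SDR cost, from above
savings = 1 - per_year / human_cost

print(f"per lead: ${per_lead:.2f}")
print(f"per year: ${per_year:,.2f}")
print(f"savings:  {savings:.0%}")
```

Under these assumptions the script lands on the same figures as the text: $0.15 per lead, $5,475 per year, and a 93% reduction against the $80,000 human cost.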

Common Pitfalls and How to Avoid Them

Despite the massive upside, adopting multi-agent systems is not without risk. Business owners who dive in blindly often encounter the following catastrophic failures:

1. The Amplification of Hallucinations

If a traditional chatbot hallucinates a fake fact, you see it and correct it. In a multi-agent system, if the "Researcher" agent hallucinates a fake fact, it passes that fact to the "Writer", who expands upon it brilliantly, who passes it to the "Editor", who assumes the fact is true and polishes the grammar. The final output is beautifully written, perfectly formatted, absolute garbage.

The Fix: You must equip your researcher agents with "Retrieval tools" (giving them access to specific trusted databases, not just open-ended web searches) and explicitly instruct your Editor agents to perform secondary verification loops using entirely different API endpoints.
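
One way to picture the secondary verification loop is the sketch below. It assumes each claim arrives with a source URL and a quoted snippet, that the Editor re-fetches the source through its own independent fetcher (`fetch_text`, a hypothetical callable), and that the allowlist of trusted domains is yours to define; the domains shown are only examples.

```python
from urllib.parse import urlparse

# Hypothetical allowlist; replace with the sources your business actually trusts.
TRUSTED_DOMAINS = {"federalreserve.gov", "bls.gov"}


def is_trusted(url: str) -> bool:
    """True if the URL's host is a trusted domain or a subdomain of one."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in TRUSTED_DOMAINS)


def verify_claims(claims: list[dict], fetch_text) -> list[str]:
    """Return a list of problems; an empty list means every claim checked out."""
    problems = []
    for claim in claims:
        if not is_trusted(claim["source"]):
            problems.append(f"untrusted source: {claim['source']}")
        elif claim["quote"] not in fetch_text(claim["source"]):
            problems.append(f"quote not found in source: {claim['quote']!r}")
    return problems
```

An exact substring match is deliberately strict. A production Editor would likely need fuzzier matching, but a strict check fails loudly, which is the safer default while you are still building trust in the pipeline.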

2. Infinite Loops

When agents are allowed to debate endlessly (especially in AutoGen), they can easily get stuck in infinite politeness or endless nitpicking loops. They will consume fifty cents of API tokens per minute just saying "I apologize for the error, here is the fix," followed by "Thank you, but you missed a comma," indefinitely.

The Fix: Always set a strict iteration cap on conversation turns (CrewAI agents expose this as max_iter). If the agents cannot agree after 5 back-and-forths, the system must pause and escalate to a human.
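
The cap-and-escalate pattern looks roughly like this in framework-agnostic Python. The `developer` and `reviewer` callables are hypothetical stand-ins for two agents; real frameworks provide their own settings for this, so treat the code as an illustration of the logic rather than any library's API.

```python
def debate(developer, reviewer, task: str, max_turns: int = 5) -> dict:
    """Alternate developer and reviewer turns until approval or the cap is hit."""
    feedback = None
    for turn in range(1, max_turns + 1):
        work = developer(task, feedback)
        feedback = reviewer(work)
        if feedback is None:  # the reviewer has no objections
            return {"status": "approved", "turns": turn, "result": work}
    # Cap reached: stop spending tokens and hand the decision to a person.
    return {"status": "needs_human", "turns": max_turns, "last_feedback": feedback}


# A reviewer that never stops nitpicking triggers the escalation path.
outcome = debate(
    developer=lambda task, feedback: f"draft for {task}",
    reviewer=lambda work: "You missed a comma.",
    task="refactor billing module",
)
print(outcome["status"])  # needs_human
```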

How to Implement Agentic AI Today: The Blueprint

If you are ready to transition your small business to an agentic model, you must proceed strategically. Do not attempt to automate your entire core fulfillment pipeline on day one. Follow this exact blueprint.

- Map the Analog Process: You cannot automate something you don't understand. Take out a whiteboard and map your exact current workflow. What specific tool does the human use? What exact checklist do they follow? What does a "successful" output look like?

- Start with an Asynchronous, Low-Risk Task: Do not let agents email your actual customers yet. Start with internal reporting. Have a crew of agents scrape your competitor's pricing pages every morning, summarize them, and post the report to a private Slack channel.

- Choose the Right Initial Framework: For 95% of small businesses, CrewAI is the right place to start. Install Python, install the crewai and langchain-openai packages with pip, generate an OpenAI API key and expose it as the OPENAI_API_KEY environment variable, and run the CrewAI code snippet above to test the waters.

- Enforce Strict Human-In-The-Loop (HITL): This is the most vital step of 2026 AI integration. You must create architectural friction before real-world actions are taken. If an agent drafts an email, it should save that email as a draft in your outbox. A human must physically click the green "Approve and Send" button. If the agent writes code, it must generate a Pull Request.
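
The human-in-the-loop step can be modeled as an approval queue that sits between the agents and anything with real-world side effects. The class and method names below are hypothetical, and `executor` stands in for whatever actually sends the email or opens the pull request.

```python
from dataclasses import dataclass


@dataclass
class PendingAction:
    kind: str      # e.g. "email" or "pull_request"
    payload: str   # the drafted content awaiting review


class ApprovalQueue:
    """Agents may only propose actions; nothing executes until a human approves."""

    def __init__(self, executor):
        self._executor = executor  # callable that performs the real-world action
        self._pending: dict[int, PendingAction] = {}
        self._next_id = 1

    def propose(self, kind: str, payload: str) -> int:
        action_id = self._next_id
        self._pending[action_id] = PendingAction(kind, payload)
        self._next_id += 1
        return action_id

    def approve(self, action_id: int, reviewer: str):
        action = self._pending.pop(action_id)  # raises KeyError for unknown or handled IDs
        return self._executor(action, reviewer)

    def reject(self, action_id: int) -> None:
        self._pending.pop(action_id)

    def pending_ids(self) -> list[int]:
        return list(self._pending)
```

Because `propose` never touches the executor, an agent that misbehaves can at worst fill the queue with bad drafts; it cannot send anything on its own.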

Choosing the Right LLM Engine: Open-Source vs Closed-Source

A multi-agent framework (like CrewAI or AutoGen) is simply the orchestrator. It is the "manager" that passes the clipboard around. But the actual "brain" reading the clipboard is the Large Language Model (LLM) you connect to it via an API key.

In 2026, the choice of which LLM engine you plug into your framework is the single most critical architectural decision you will make. It dictates your speed, your cost, and your security.

1. The Closed-Source Giants (OpenAI, Anthropic, Google)

Best for: General reasoning, creative coding, and complex logic puzzles.

If you are building an agentic pipeline that requires incredibly nuanced understanding (such as having an agent read a sarcastic 40-page legal brief and summarize the core sentiment), you must use a frontier closed-source model.

- GPT-4o / GPT-5: The gold standard for raw coding ability. If your AutoGen agents are writing Python scripts, you want OpenAI's models under the hood. They hallucinate syntax errors far less frequently than open-source models.

- Claude 3.5 Sonnet / Opus: The undisputed champion of massive context windows and prose. If your Writer agent is drafting a 5,000-word ebook based on 100 pages of raw research, you should route that specific agent to Anthropic's API. Claude writes with a conversational, human-like flow that GPT models historically struggle to match without heavy prompt engineering.

The Drawback: Privacy and latency. Every single time your agents communicate, that data is leaving your local machine, traveling to an OpenAI or Anthropic server farm, and returning. If you are handling proprietary customer data, this can be a serious compliance problem. Furthermore, closed-source APIs are subject to rate limiting. If you have a crew of 10 agents constantly talking to each other, you can rapidly hit your "Tokens Per Minute" (TPM) cap, causing your automated pipeline to fail mid-execution unless it is built to wait and retry.

2. High-Speed Open-Source via LPUs (Groq / Cerebras)

Best for: High-volume data parsing, real-time voice agents, and repetitive classification.

The most exciting development in 2026 agentic architecture is the rise of ultra-fast inference hardware, such as Groq's Language Processing Units (LPUs) and Cerebras's wafer-scale chips. Companies like Groq host open-source models (like Meta's Llama 3 70B or Mixtral 8x22B) on specialized chips that output text at over 800 tokens per second.

- Llama 3 70B on Groq: If you have a Researcher agent whose only job is to read thousands of lines of CSV data and classify each row as "Positive" or "Negative," using GPT-4o is a massive waste of money and time. You can route that specific agent to a Groq API endpoint. The agent will work through the sheet in seconds rather than minutes.

- Cost Efficiency: Running open-source models via fast-inference cloud providers often costs a small fraction of what frontier closed-source models charge per token.

Smart businesses in 2026 use Model Routing. They map their complex, logic-heavy tasks (like the Editor Agent) to expensive models like Claude 3.5, and they map their high-volume, repetitive tasks (like the scraping Agent) to blazing fast Llama 3 endpoints on Groq. The orchestration framework handles this seamlessly; you simply assign a different llm= parameter to each agent.
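
Model routing boils down to a lookup from agent role to model. In the sketch below the model names are illustrative placeholders rather than exact API identifiers, and the role names are assumptions; with a framework like CrewAI, the selected value is what you would turn into each agent's llm= argument.

```python
# Role -> model name. The names are illustrative placeholders, not exact API model IDs.
ROUTES = {
    "editor": "claude-3-5-sonnet",  # logic-heavy review work goes to a frontier model
    "scraper": "llama3-70b-groq",   # high-volume, repetitive work goes to fast inference
}
DEFAULT_MODEL = "gpt-4o"


def model_for(role: str) -> str:
    """Pick the model for an agent role, falling back to a general-purpose default."""
    return ROUTES.get(role, DEFAULT_MODEL)


def build_agent_configs(roles: list[str]) -> list[dict]:
    """Return one config per agent, each carrying the model it should be wired to."""
    return [{"role": role, "llm": model_for(role)} for role in roles]


for config in build_agent_configs(["scraper", "writer", "editor"]):
    print(config["role"], "->", config["llm"])
```

Keeping the routing table in one place means a pricing change or a new model release is a one-line edit instead of a hunt through every agent definition.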

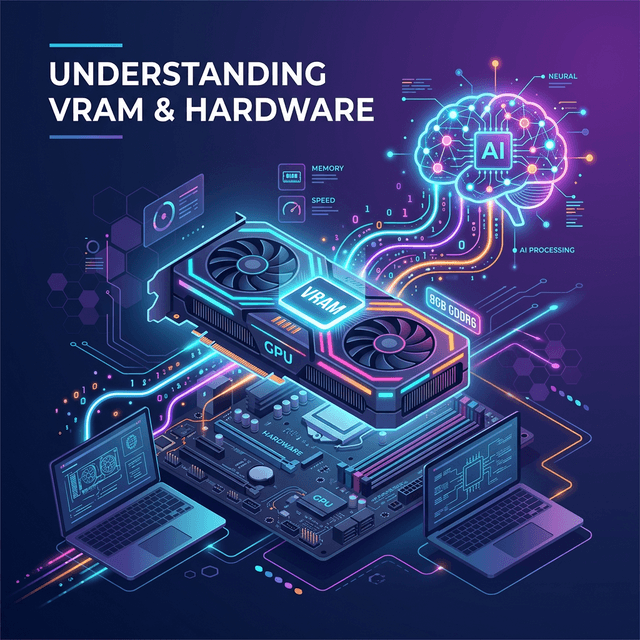

3. Fully Local Open-Source (Ollama / vLLM)

Best for: Absolute data privacy, air-gapped systems, and defense contractors.

As mentioned in the Openclaw section, running your agents locally via Ollama or vLLM means the data never leaves your physical hardware. You download the model weights (e.g., a 7-Billion parameter Mistral model), load it into your own GPU's VRAM, and point your CrewAI or Openclaw framework to the local server (localhost:11434 is Ollama's default; vLLM defaults to port 8000).

While these smaller models are not as "smart" as GPT-4o, you can largely compensate for their lack of general knowledge by providing them with hyperspecific tools and explicit system prompts. If a local model has only one job, such as converting a list of names into SQL insert statements, it can perform that job reliably, securely, and with no per-token API fees.
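
To make "point your framework to localhost" concrete, here is a minimal sketch that talks to a local Ollama server directly through its /api/generate endpoint using only the standard library. It assumes Ollama is running on its default port and that a model named "mistral" has already been pulled; the function names are hypothetical.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def build_request(prompt: str, model: str = "mistral") -> urllib.request.Request:
    """Build the POST request; "stream": False asks for a single JSON reply."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "mistral") -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as resp:
        return json.loads(resp.read())["response"]


# Requires a running Ollama server, so the call is left commented out:
# print(generate("Write one SQL INSERT statement for the name 'Ada Lovelace'."))
```

Nothing in this request leaves the machine, which is the whole appeal of the local setup: the prompt, the model weights, and the response all stay on your own hardware.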

Building an Agentic Evals Framework

When human employees start a new job, they undergo a 90-day performance review. Multi-agent systems require the exact same managerial oversight. In the AI engineering world, this is known as building an Evals (Evaluations) Framework.

Because agents operate non-deterministically (meaning they might give slightly different outputs to the exact same prompt), you cannot simply unit-test them like traditional software. If you ask an agent to write a marketing email, there is no mathematical "true/false" assertion to prove the email is good.

To run a multi-agent system in production without tearing your hair out, you must implement automated Evals. Here is how a small business sets this up:

1. The Golden Dataset

Before you unleash your agents on live customer data, you must manually create a "Golden Dataset." This is a spreadsheet of 50 exact inputs and 50 perfect, human-written outputs. For example, 50 bad customer support emails, and the 50 perfect, empathetic responses your best human agent wrote.

2. The Judge Agent (LLM-as-a-Judge)

You do not have time to read 50 AI-generated emails every time you tweak your framework. Instead, you create a standalone "Judge Agent." This agent's only job is to grade the work of your other agents.

You pass the Judge Agent the original input, your Golden human output, and the Agent output. You prompt the Judge: "Grade the Agent Output on a scale of 1-10 based on how closely it matches the tone, brevity, and factual accuracy of the Golden Human Output. Provide a one-sentence justification."

3. Continuous Integration (CI/CD) for Prompts

Whenever you change a system prompt (e.g., telling your Writer agent to "Be 10% more aggressive"), you don't just guess if it worked. You run your entire 50-item Golden Dataset through the newly tweaked pipeline.

Your Judge Agent spits out a dashboard. "Previous Average Score: 8.2/10. New Average Score: 6.4/10. Reason: The new aggressive tone caused the agent to sound condescending."

You instantly know your prompt tweak failed, and you revert it. This scientific, metric-driven approach separates the amateurs playing with ChatGPT from the businesses generating massive revenue with agentic automation. You must treat your AI prompts as code, and you must review their outputs quantitatively.
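
The three pieces above (golden dataset, judge, regression check) fit together roughly as follows. This is a sketch under assumptions: `pipeline` and `judge` are hypothetical callables standing in for your agent crew and your Judge Agent, and the 0.2-point tolerance is an arbitrary example threshold.

```python
from statistics import mean


def run_evals(golden_dataset: list[dict], pipeline, judge) -> float:
    """Run every golden input through the pipeline and return the average judge score."""
    scores = []
    for case in golden_dataset:
        agent_output = pipeline(case["input"])
        scores.append(judge(case["input"], case["golden_output"], agent_output))
    return mean(scores)


def should_keep_change(baseline: float, candidate: float, tolerance: float = 0.2) -> bool:
    """Keep a prompt change only if the average score did not drop by more than the tolerance."""
    return candidate >= baseline - tolerance


# With the scores from the example dashboard, the tweak is rejected.
print(should_keep_change(baseline=8.2, candidate=6.4))  # False
```

In practice you would run `run_evals` once before and once after each prompt change, store both averages, and wire `should_keep_change` into whatever process decides whether the new prompt ships.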

Conclusion

The transition from single-prompt chatbots to autonomous, interacting multi-agent frameworks is as massive a technological leap as the transition from the typewriter to the personal computer.

The small businesses that embrace orchestration frameworks like CrewAI, Openclaw, and AutoGen will operate with the velocity, research depth, and output quality of massive, publicly traded corporations. Those who resist will find themselves financially incapable of competing with the microscopic operating costs of their agentic competitors. The software is here, the economics are undeniable, and the execution relies entirely on you.


Alex the Engineer

Founder & AI Architect

Senior software engineer turned AI Agency owner. I build massive, scalable AI workflows and share the exact blueprints, financial models, and code I use to generate automated revenue in 2026.
